Monitor, Debug & Audit Your Valkey

Persistent observability for Valkey — debug incidents yourself or with your agent, hours after they happen, or be proactively notified via webhooks before they start. Redis-compatible.

Or self-host:

claude mcp add betterdb -- npx @betterdb/mcp betterdb-mcp --autostart --persist

Adds BetterDB as an MCP server to Claude Code. Starts monitoring automatically.

Then ask Claude about your database health, slow queries, or memory usage.

See what's actually happening inside your Valkey instance

Historical data that survives a log rotation. Client-level attribution. Automatic anomaly detection.

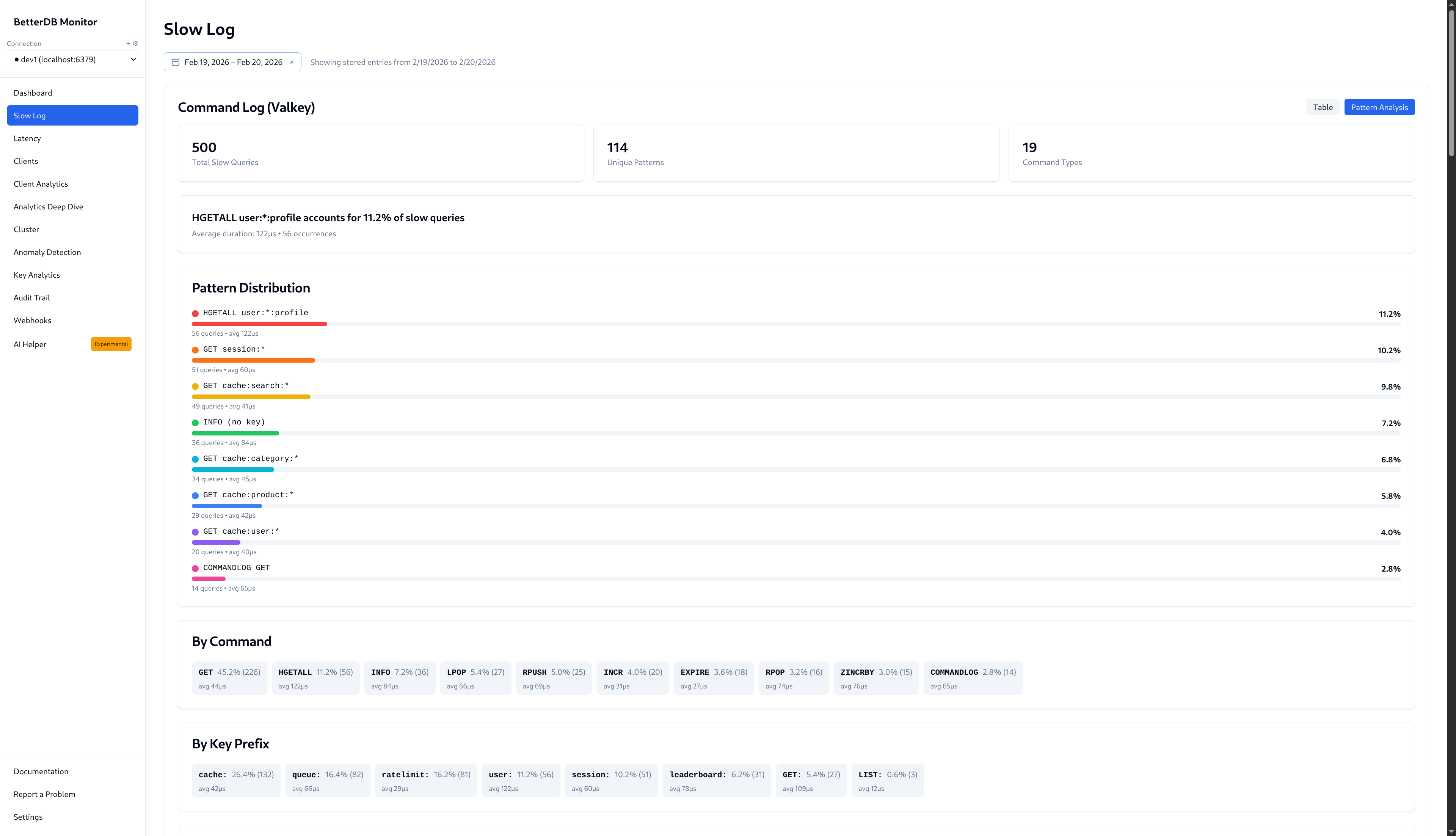

500 slow queries, 114 unique patterns, 19 command types — across any historical time range. The evidence that used to disappear after a log rotation.

Built for humans and agents alike

Investigate incidents yourself — or point your agent at BetterDB and walk away.

Your agent investigates

Connect BetterDB's MCP server to Claude Code, Cursor, or any MCP-compatible client. Ask questions in plain language. Get back structured diagnostics — slowlog history, anomaly summaries, latency trends, ACL audit — without opening a browser.

{
  "mcpServers": {
    "betterdb": {
      "command": "npx",
      "args": ["-y", "@betterdb/mcp"]
    }
  }
}

You investigate

Full dashboard, slowlog pattern analysis, anomaly detection, client attribution, and vector search — everything you need to find what went wrong and why, with data that persists beyond the log rotation.

Everything you need to understand your Valkey instance

Deep insights into your Valkey deployment with minimal overhead.

COMMANDLOG Support

Valkey 8.1+ only. See commands with large requests or large replies, not just slow ones.

Anomaly Detection

Automatic detection of unusual patterns across memory, CPU, and connections.
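The page does not say how BetterDB detects anomalies. As a rough illustration of the general idea only, a common baseline is a rolling z-score: compare each new sample with the mean and standard deviation of the samples just before it. The function name, window size, and threshold below are assumptions made for this sketch, not BetterDB's detector.

```typescript
// Hypothetical helper, not BetterDB's implementation: returns the indexes of
// samples that deviate from the preceding window by more than `threshold`
// standard deviations.
function findAnomalies(
  samples: number[],
  windowSize = 5,
  threshold = 3
): number[] {
  const anomalies: number[] = [];
  for (let i = windowSize; i < samples.length; i++) {
    const window = samples.slice(i - windowSize, i);
    const mean = window.reduce((sum, v) => sum + v, 0) / windowSize;
    const variance =
      window.reduce((sum, v) => sum + (v - mean) ** 2, 0) / windowSize;
    const stdDev = Math.sqrt(variance);

    if (stdDev === 0) {
      // A perfectly flat window: any change at all counts as unusual.
      if (samples[i] !== mean) anomalies.push(i);
      continue;
    }
    if (Math.abs(samples[i] - mean) / stdDev > threshold) {
      anomalies.push(i);
    }
  }
  return anomalies;
}

// Example: connection counts sampled at a fixed interval, with one spike.
const connectionCounts = [100, 102, 98, 101, 99, 100, 400, 101];
const spikes = findAnomalies(connectionCounts);
```

Here `spikes` contains only the index of the 400 sample. A trailing window like this adapts to slow drift but stays skewed for a few samples after a spike, which is one reason production detectors tend to use more robust statistics.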

Historical Analytics

Query what happened at 3am, not just what's happening now.

Cluster Visualization

Interactive topology graphs, slot heatmaps, and migration tracking.

ACL Audit Trail

Track who accessed what for compliance and debugging.

Slowlog Patterns

See that GET user:* is 80% of your slow queries.
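A figure like "GET user:* is 80%" comes from grouping individual slow-log entries by a normalized pattern. The sketch below shows one simple way to do that grouping. The `SlowEntry` shape and the rule of collapsing everything after the first colon into `*` are assumptions for illustration, not BetterDB's actual pattern analysis.

```typescript
// Hypothetical entry shape: the command name and the first key it touched.
interface SlowEntry {
  command: string;
  key: string;
}

// Collapse "user:1234" to "user:*" by keeping only the namespace prefix.
// Keys without a colon are left unchanged.
function toPattern(entry: SlowEntry): string {
  const colon = entry.key.indexOf(":");
  const keyPattern =
    colon === -1 ? entry.key : `${entry.key.slice(0, colon)}:*`;
  return `${entry.command.toUpperCase()} ${keyPattern}`;
}

// Return each pattern's share of all entries, largest share first.
function patternShares(entries: SlowEntry[]): Array<[string, number]> {
  const counts = new Map<string, number>();
  for (const entry of entries) {
    const pattern = toPattern(entry);
    counts.set(pattern, (counts.get(pattern) ?? 0) + 1);
  }
  return Array.from(counts.entries())
    .map(([pattern, n]): [string, number] => [pattern, n / entries.length])
    .sort((a, b) => b[1] - a[1]);
}

const shares = patternShares([
  { command: "get", key: "user:1" },
  { command: "get", key: "user:2" },
  { command: "get", key: "user:3" },
  { command: "get", key: "user:4" },
  { command: "hgetall", key: "session:abc" },
]);
```

With this input, `shares[0]` is the `GET user:*` pattern with a share of 0.8, and the single `HGETALL session:*` entry accounts for the remaining 0.2.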

Key Analytics

Analyze key patterns by namespace and key type.

Client Analytics

See which clients consume resources. Detect unusual buffer sizes and connection spikes.

Prometheus & Webhooks

101 Prometheus metrics plus Slack, email, and webhook notifications.

AI Assistant

Ask questions about your Valkey instance in plain English.

Vector Search

Inspect vector indexes, browse embeddings, and find semantically similar entries with similarity scores.

MCP Server

Access BetterDB's full functionality from any MCP-compatible client — Claude Code, CLI agents, or IDEs.

View on registry →

Hot Key Tracking

Top-50 keys by access frequency with rank movement over time. Spot what's climbing before it becomes a bottleneck.

Latency Monitoring

Per-event latency history across P50/P95/P99. Know when your instance started slowing down, not just that it is.

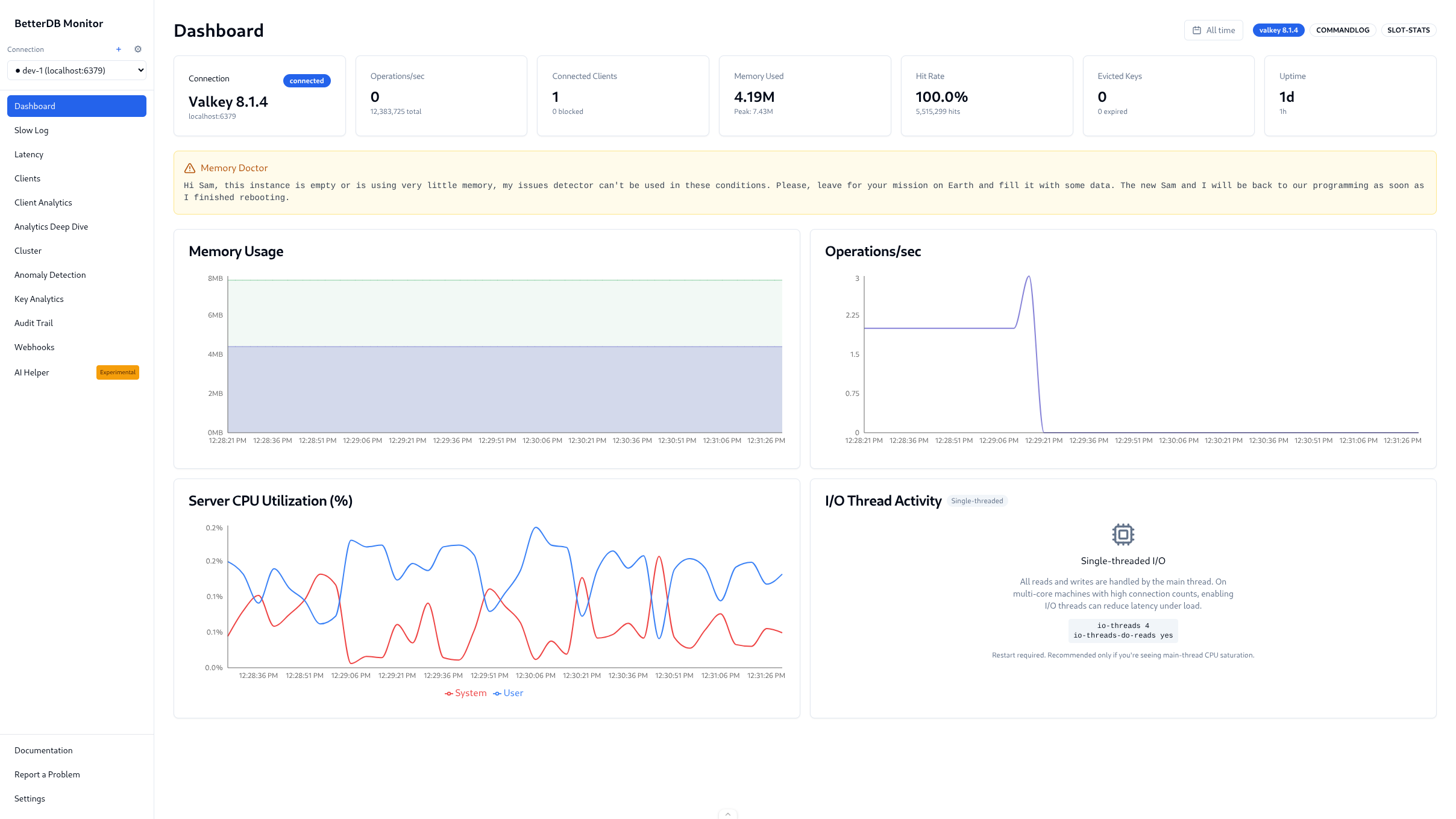
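For readers less familiar with the P50/P95/P99 notation used in Latency Monitoring: each is a percentile of the observed latencies. The sketch below uses the nearest-rank definition, which is one of several common conventions. The page does not say which one BetterDB uses, so treat the function as illustrative.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p percent
// of all samples are less than or equal to it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  if (p <= 0 || p > 100) throw new Error("p must be in (0, 100]");
  const sorted = samples.slice().sort((a, b) => a - b);
  // Multiply before dividing to keep the rank arithmetic exact for integers.
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[rank - 1];
}

// Example: ten latency samples in milliseconds, one of them an outlier.
const latenciesMs = [12, 3, 7, 1, 9, 15, 4, 8, 2, 40];
const p50 = percentile(latenciesMs, 50);
const p95 = percentile(latenciesMs, 95);
const p99 = percentile(latenciesMs, 99);
```

In this sample `p50` is 7 while `p95` and `p99` are both 40: the median hides the outlier, and the tail percentiles expose it. That is why latency is usually tracked at several percentiles rather than as an average.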

CPU & I/O Threads

Per-thread I/O metrics and CPU utilization charts. Valkey-specific visibility no Redis tool can provide.

Semantic caching — the only one built for Valkey

@betterdb/semantic-cache drops into any LLM app and cuts API costs by serving semantically similar responses from Valkey. No framework lock-in. And because it runs on the same Valkey instance you're already monitoring, BetterDB surfaces hit rate, similarity scores, and cost savings automatically.
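"Semantically similar" generally means that the embedding of a new prompt is close enough to the embedding of a prompt answered before. The in-memory sketch below illustrates that idea with cosine similarity and a threshold. It is not the library's implementation, which according to this page relies on valkey-search. The `CachedAnswer` type, the `lookup` function, and the 0.9 threshold are all assumptions for illustration.

```typescript
// Cosine similarity of two equal-length vectors, in the range [-1, 1].
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("dimension mismatch");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // zero vectors match nothing
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Hypothetical cache entry: a stored prompt embedding and its response.
interface CachedAnswer {
  embedding: number[];
  response: string;
}

// Return the most similar cached response at or above the threshold,
// or undefined on a miss (the caller would then call the LLM).
function lookup(
  query: number[],
  cache: CachedAnswer[],
  threshold = 0.9
): string | undefined {
  let best: CachedAnswer | undefined;
  let bestScore = threshold;
  for (const item of cache) {
    const score = cosineSimilarity(query, item.embedding);
    if (score >= bestScore) {
      best = item;
      bestScore = score;
    }
  }
  return best?.response;
}

// Toy two-dimensional "embeddings" standing in for real model output.
const toyCache: CachedAnswer[] = [
  { embedding: [1, 0], response: "Paris" },
  { embedding: [0, 1], response: "Berlin" },
];
const hit = lookup([0.99, 0.1], toyCache);
const miss = lookup([1, 1], toyCache);
```

Here `hit` is "Paris" because the query vector is nearly parallel to the first entry, and `miss` is undefined because neither entry clears the threshold. The threshold is the main tuning knob: lower values raise the hit rate at the cost of returning answers to questions that only look similar.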

Valkey-native

Handles valkey-search API differences explicitly. Works on ElastiCache, Memorystore, or self-hosted. Not a Redis port.

Standalone

Any LLM client — OpenAI, Anthropic, local model. No LangChain, no LiteLLM required.

Full observability

Every check() and store() emits OTel spans and Prometheus metrics. Hit rate, similarity scores, latency — zero extra instrumentation.

Every other library makes you pick two.

| Library | Valkey-native | Standalone | Built-in OTel + Prometheus |
|---|---|---|---|
| @betterdb/semantic-cache | ✅ | ✅ | ✅ |
| RedisVL SemanticCache | ❌ Redis only | ✅ | ❌ |
| LangChain RedisSemanticCache | ❌ Redis only | ❌ Requires LangChain | ❌ |
| LiteLLM redis-semantic | ❌ Redis only | ❌ Requires LiteLLM | ❌ Partial |
| Upstash semantic-cache | ❌ Upstash only | ✅ | ❌ |
| Redis LangCache | ❌ Redis Cloud only | ❌ Managed only | ✅ Dashboard only |

import Valkey from 'iovalkey';
import { SemanticCache } from '@betterdb/semantic-cache';

const cache = new SemanticCache({
  client: new Valkey({ host: 'localhost', port: 6399 }),
  embedFn: yourEmbedFn, // OpenAI, Voyage, Cohere, local (anything)
});

await cache.initialize();
await cache.store('What is the capital of France?', 'Paris');

const result = await cache.check('Capital city of France?');
// result.hit === true, so the LLM call is skipped

Our goal: zero Valkey hacks

If you've ever written a custom script, stitched together three tools, or just accepted that something wasn't observable, we want to hear about it. Tell us what's missing. We'll build it, and the next person won't have to figure it out themselves.

Book 15 min with Kristiyan

Prefer email? kristiyan@betterdb.com

Launch Special — Use license key beta for full Pro features

Ready to get started?

Start monitoring in minutes — no infrastructure to maintain. Team collaboration, agent-based monitoring for private databases, and more. Or self-host — open source core, zero lock-in.